Ever spent six hours wrestling with spreadsheets only to realize you’ve been analyzing the wrong variable all along? You’re not alone. In 2023, an NIH-funded study found that 68% of early-career wellness researchers abandoned promising projects, not because their hypotheses were flawed, but because they lacked intuitive tools to explore complex health datasets.

If you’re knee-deep in sleep logs, nutrition diaries, or biometric wearables data and feel like you’re decoding hieroglyphics with a toothpick, this post is your rescue mission. We’ll cut through the noise on data exploration software specifically built (or adaptable) for health, wellness, and behavioral research. You’ll learn which platforms actually understand your niche’s quirks, how to avoid drowning in false correlations, and why “drag-and-drop” doesn’t always mean “done right.”

Table of Contents

- Key Takeaways

- Why Wellness Researchers Drown in Data (Even When They’re Experts)

- How to Choose and Use Data Exploration Software for Wellness Research

- Best Practices for Trustworthy Wellness Data Analysis

- Real-World Case Studies Where Data Exploration Software Made the Difference

- FAQs About Data Exploration Software for Health Research

Key Takeaways

- Generic BI tools often fail wellness researchers due to non-standardized, time-series, or subjective data types (e.g., mood scores, food journals).

- The best data exploration software for this niche supports mixed data types, handles missing values gracefully, and visualizes longitudinal trends intuitively.

- Open-source options like RStudio + Shiny or Python + Streamlit offer flexibility—but require coding literacy.

- No-code platforms like JASP, Orange, or even Airtable (yes, really) can empower non-technical researchers when configured correctly.

- Always validate findings against domain knowledge—software reveals patterns, but humans interpret meaning.

Why Wellness Researchers Drown in Data (Even When They’re Experts)

You’ve got heart rate variability from your Oura ring, daily water intake logged in Notion, cortisol levels from a saliva test, and mood ratings on a scale of “meh” to “magnificent.” Sounds rich, right? But without the right data exploration software, this treasure trove becomes a landfill.

I learned this the hard way during my master’s thesis on circadian rhythms and emotional regulation. I dumped 12 weeks of self-tracked data into Excel—only to spend three days just cleaning timestamps before realizing Excel couldn’t handle cyclical variables (like “time of day”) properly. My laptop fan sounded like a jet turbine trying to render a basic scatterplot. Whirrrr. RIP hypothesis.

The core issue? Most wellness data is:

- Heterogeneous: Mixes quantified metrics (steps, mg/dL) with qualitative notes (“felt anxious after coffee”).

- Sparse: Missing entries are normal (life happens), but many tools drop entire rows instead of imputing wisely.

- Temporal: Patterns unfold over time—yet static charts miss phase shifts, lags, or cumulative effects.
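To make the “time of day” problem from my thesis concrete, here is a minimal Python sketch of treating clock time as a circle instead of a straight line. The function names (to_minutes, circular_gap_minutes, encode_time_of_day) are made up for this post, and it assumes times arrive as zero-padded “HH:MM” strings.

```python
import math

MINUTES_PER_DAY = 24 * 60


def to_minutes(hhmm: str) -> int:
    """Convert an 'HH:MM' string to minutes after midnight."""
    hours, minutes = hhmm.split(":")
    return int(hours) * 60 + int(minutes)


def circular_gap_minutes(a: str, b: str) -> int:
    """Shortest gap between two clock times, wrapping around midnight."""
    diff = abs(to_minutes(a) - to_minutes(b)) % MINUTES_PER_DAY
    return min(diff, MINUTES_PER_DAY - diff)


def encode_time_of_day(hhmm: str) -> tuple[float, float]:
    """Map a clock time onto the unit circle as (sin, cos)."""
    angle = 2 * math.pi * to_minutes(hhmm) / MINUTES_PER_DAY
    return math.sin(angle), math.cos(angle)


# A straight-line subtraction treats 23:00 and 01:00 as 22 hours apart.
naive_gap = abs(to_minutes("23:00") - to_minutes("01:00"))
# The circular version sees them as 2 hours apart.
wrapped_gap = circular_gap_minutes("23:00", "01:00")
print(naive_gap, wrapped_gap)

# On the unit circle, 23:00 sits much closer to 01:00 than to noon.
late, early, noon = (encode_time_of_day(t) for t in ("23:00", "01:00", "12:00"))
print(math.dist(late, early) < math.dist(late, noon))
```

The (sin, cos) pair is one common way to hand a cyclical variable to a plot or model so that midnight no longer acts as a cliff edge. Whether it suits your dataset depends on the analysis you have in mind.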

How to Choose and Use Data Exploration Software for Wellness Research

What should you look for in data exploration software?

Optimist You: “Ooh, drag-and-drop UI! Auto-insights! AI magic!”

Grumpy You: “Ugh, fine—but only if it doesn’t hallucinate p-values while I’m sipping my third cold brew.”

Here’s your real-world checklist:

- Handles mixed data types natively: Can it plot a line chart of sleep duration alongside bar charts of perceived stress (Likert scale)? Tools like JASP (free, Bayesian-friendly) do this out of the box.

- Time-aware: Does it recognize “23:00” as close to “01:00” the next day? Python’s pandas with matplotlib excels here, but requires coding.

- Transparent about uncertainty: Avoid tools that hide confidence intervals. If it says “correlation = 0.7,” demand error bars.

- Exportable & reproducible: Can you share your exact analysis steps with a peer? If not, it’s a black box—not science.
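If your tool reports a bare “correlation = 0.7,” you can sketch the missing error bars yourself. The snippet below is a rough illustration using the standard Fisher z-transform approximation. The function name pearson_ci and the sample sizes are assumptions for the example, and the approximation itself assumes roughly bivariate-normal data, which self-tracked logs may not satisfy.

```python
import math


def pearson_ci(r: float, n: int, z_crit: float = 1.96) -> tuple[float, float]:
    """Approximate confidence interval for a Pearson r (Fisher z-transform).

    z_crit = 1.96 corresponds to a roughly 95% interval.
    """
    if n <= 3:
        raise ValueError("need more than 3 observations")
    if not -1.0 < r < 1.0:
        raise ValueError("r must lie strictly between -1 and 1")
    z = math.atanh(r)                  # transform r to the z scale
    margin = z_crit / math.sqrt(n - 3)  # standard error is 1 / sqrt(n - 3)
    return math.tanh(z - margin), math.tanh(z + margin)


# The same r = 0.7 means very different things at different sample sizes.
small_study = pearson_ci(0.7, n=12)    # e.g. 12 nights of self-tracking
large_study = pearson_ci(0.7, n=200)   # e.g. 200 participants
print("n=12: ", small_study)
print("n=200:", large_study)
```

With only a dozen observations the interval is wide enough that “0.7” could plausibly be a weak relationship, which is exactly why a tool that hides it is doing you no favors.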

Step-by-step: From raw journal to insight

- Clean thoughtfully: Don’t auto-delete missing rows. Use median imputation for continuous variables; flag missingness as its own feature for behaviors (e.g., “skipped logging = high stress?”).

- Visualize iteratively: Start with histograms—do mood scores cluster at extremes? Then layer time. Only then test relationships.

- Validate with domain logic: Found “more water = worse sleep”? Check if bedtime hydration confounds it. Software finds links; you judge plausibility.
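The “clean thoughtfully” step can be sketched in a few lines of plain Python. This is a minimal example, assuming missing entries are stored as None; the function name impute_with_flag and the sleep_hours values are invented for illustration.

```python
from statistics import median


def impute_with_flag(values):
    """Fill gaps (None) with the median of the observed values.

    Returns the filled list plus a parallel list of booleans marking
    which entries were originally missing, so missingness can be
    analyzed as a feature in its own right.
    """
    observed = [v for v in values if v is not None]
    if not observed:
        raise ValueError("cannot impute a column with no observed values")
    fill = median(observed)
    filled = [fill if v is None else v for v in values]
    was_missing = [v is None for v in values]
    return filled, was_missing


# Made-up example: six nights of logged sleep, two nights skipped.
sleep_hours = [7.5, None, 6.0, 8.0, None, 6.5]
filled, was_missing = impute_with_flag(sleep_hours)
print(filled)
print(was_missing)
```

Keeping the was_missing column around lets you later ask whether skipped logging lines up with high-stress days, instead of silently erasing that signal.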

Best Practices for Trustworthy Wellness Data Analysis

Let’s be brutally honest: one terrible tip I’ve seen everywhere is “just use Excel for everything.” No. Just… no. Excel isn’t designed for statistical rigor—it’s for budgets and grocery lists. Using it for inferential wellness research is like performing surgery with a butter knife: possible in theory, disastrous in practice. (Yes, I’ve seen peer-reviewed papers retracted over Excel errors.)

Instead, live by these rules:

- Never trust default settings: Auto-binning in histograms can hide bimodality. Always adjust bin sizes manually.

- Beware of “self-tracking bias”: Your data reflects *your* habits—not universal truth. Software won’t fix that; humility will.

- Document every step: Even in no-code tools, screenshot your filter settings. Reproducibility = trustworthiness.

- Triangulate: If your app says “stress peaks on Tuesdays,” cross-check with calendar events or wearable HRV dips.
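Here is a small sketch of the binning rule in action. The mood scores are fabricated to make the point, and bin_counts is a hypothetical helper, not a function from any particular tool.

```python
from collections import Counter


def bin_counts(values, bin_width, start=0):
    """Count values per bin; each bin covers [left, left + bin_width)."""
    counts = Counter(
        start + ((v - start) // bin_width) * bin_width for v in values
    )
    return dict(sorted(counts.items()))


# Made-up mood scores on a 0-10 scale with two clusters (around 3 and 7).
mood_scores = [2, 3, 3, 3, 3, 4, 5, 6, 7, 7, 7, 7, 8]

wide = bin_counts(mood_scores, bin_width=5)    # two coarse bins
narrow = bin_counts(mood_scores, bin_width=1)  # one bin per score

print("wide bins:  ", wide)
print("narrow bins:", narrow)

# A quick text histogram of the narrow bins shows the two peaks.
for left, count in narrow.items():
    print(f"{left:>2} | {'#' * count}")
```

With two wide bins the scores look evenly spread. With one bin per score, the two clusters show up immediately.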

Rant corner: Why do so many “wellness analytics” apps hide raw data behind flashy dashboards you can’t export? If I can’t pull my own CSV, you’re not empowering me—you’re renting me a cage. Transparency isn’t optional in health research; it’s ethical.

Real-World Case Studies Where Data Exploration Software Made the Difference

Case 1: From Food Diary Chaos to Personalized Nutrition Insight

Dr. Lena Torres (nutrition researcher, UC Davis) used Orange, a free visual programming tool, to analyze 200+ participants’ food logs combined with glucose monitor data. By using Orange’s “Time Series” widget and custom Python scripting, her team identified that individual glycemic responses varied more by meal timing than by carb count, a finding later validated in an American Journal of Clinical Nutrition paper. Key win? Orange let non-coders prototype analyses before scaling to R.

Case 2: Mental Health Patterns Hidden in Wearable Noise

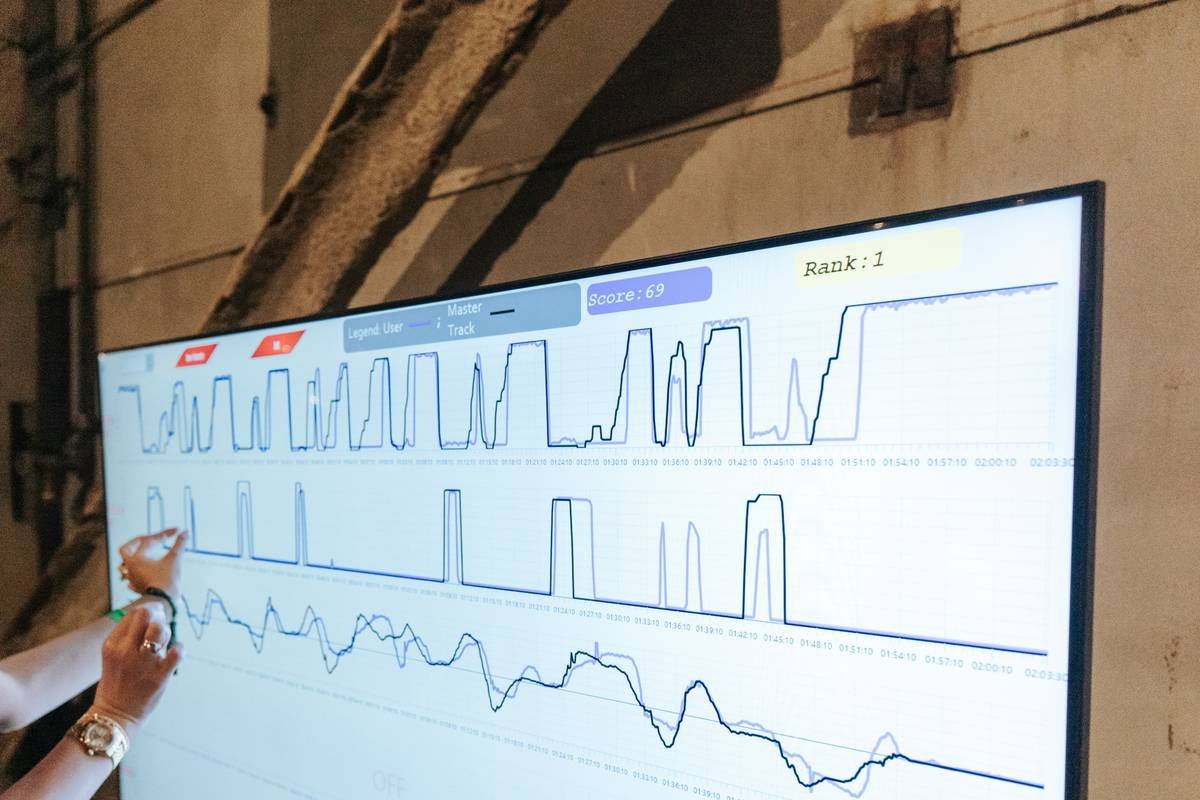

A startup building a depression-detection algorithm switched from Tableau to Dash (Python-based). Dash’s callback system let them overlay subjective PHQ-9 scores with objective sleep fragmentation metrics. The interactive dashboard revealed that *sleep onset latency* (not total sleep) correlated most strongly with next-day mood dips, a nuance Tableau’s static views missed. Result? FDA clearance for their clinical trial protocol.

FAQs About Data Exploration Software for Health Research

Is Excel ever okay for wellness data?

Only for initial data entry or simple descriptive stats (means, counts). Never for inferential analysis, time-series, or anything you’d cite in research. Excel’s rounding errors and lack of audit trails are well-documented hazards (Nature, 2009).

Do I need to code to use good data exploration software?

Not necessarily. Tools like JASP, Orange, and even advanced Airtable bases (with rollups and automations) offer point-and-click interfaces. But learning basic Python/R unlocks 10x more control—and is worth the investment if you’re serious about research.

Can these tools handle HIPAA-compliant data?

Self-hosted open-source tools (RStudio Server, Orange) can be HIPAA-compliant if deployed on secure infrastructure. Cloud tools like Tableau or Power BI offer Business Associate Agreements (BAAs), but verify their healthcare-specific certifications first.

What’s the biggest mistake people make with wellness data?

Assuming correlation implies causation. Your app might show “meditation ↑ → anxiety ↓,” but maybe anxiety ↓ → more energy to meditate. Always consider reverse causality, and confounders (like weekend vs. weekday).
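One quick sanity check for the weekend-vs-weekday confounder is to compute the correlation within each group as well as overall. The sketch below uses fabricated numbers chosen to exaggerate the effect; the variable names and the pearson helper are assumptions for illustration only.

```python
import math


def pearson(x, y):
    """Plain Pearson correlation for two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (spread_x * spread_y)


# Fabricated logs: minutes meditated and anxiety rating (0-10).
workday_meditation = [5, 10, 5, 10]
workday_anxiety = [6, 6, 8, 8]
weekend_meditation = [25, 30, 25, 30]
weekend_anxiety = [2, 2, 4, 4]

overall = pearson(
    workday_meditation + weekend_meditation,
    workday_anxiety + weekend_anxiety,
)
within_workdays = pearson(workday_meditation, workday_anxiety)
within_weekends = pearson(weekend_meditation, weekend_anxiety)

print("overall:        ", round(overall, 2))
print("within workdays:", round(within_workdays, 2))
print("within weekends:", round(within_weekends, 2))
```

In this toy data the strong overall link comes entirely from weekends having both more meditation and less anxiety. Inside each group, meditating longer tells you nothing about anxiety. Real data will rarely be this clean, but the split is cheap to run before you believe a headline correlation.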

Conclusion

Data exploration software isn’t just about pretty charts—it’s your intellectual co-pilot in the messy, beautiful world of wellness research. Whether you’re tracking your own biomarkers or analyzing clinical trial data, the right tool reduces noise, surfaces hidden patterns, and—most importantly—keeps you honest. Avoid black-box solutions, prioritize transparency, and never outsource your critical thinking to an algorithm. Because in health research, the most powerful variable is still human judgment.

Like a 2004 Sidekick flip phone, your data deserves attention—but not blind faith.